The web is all around us. With technologies like WebGL, you can unlock great performance for real-time graphics with relatively little effort. Throw together some JavaScript and a few shaders, upload everything to a web server, and people around the world can experience it.

I work for a Santa Monica-based production company called Tool. Recently, we decided to throw a party for employees and friends, and the large venue seemed perfect for projections. While developing the project, we realized that it would be even better if the visuals were interactive. By using the Leap Motion Controller, our guests could interact with the visuals using one hand while holding a drink in the other.

To create the projection, we used a combination of several web APIs. We prepared the demo for an event where it was projected on a wall, so cross-browser compatibility was not a top priority for us. Currently, the demo works only in Chrome. However, since most of the code uses standard APIs, it should work in other browsers after some minor modifications.

The technologies we used include WebGL for rendering, Web Audio API for playback and sound analysis, and WebRTC to handle microphone input. Last but not least, we used LeapJS to interpret data from the device. Today, I’m going to show you how it all fits together – including smoothing out the data and gauging how open or closed a hand is. To see the demo in action, check it out on Tool’s website. You can also dive into the complete source code on GitHub.

Visuals with WebGL

WebGL is used for the most visible part of the demo – the 3D graphics. We built everything from scratch using a custom engine, and all of the visuals rely on dynamic geometry generated with code and custom GLSL shaders.

Dynamic geometry

All shapes are created dynamically with code. While this may seem like a lot of extra work compared to modeling them in Blender or C4D, it’s actually a lot more flexible. The shapes are simple, so they were relatively easy to handle. However, the code needs to be written carefully, because even simple geometries tend to result in pretty complex code. During the production of the demo, I had to refactor my whole procedural mesh generation code at one point, to make sure we didn’t end up with a mess that would be hard to debug.
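As a rough illustration of what building a shape in code can look like, here is a minimal sketch that generates a flat grid of triangles. It is not the demo's engine code: the function name `createGridGeometry` and the returned `{ positions, indices }` layout are assumptions made for this example.

```javascript
// Hypothetical helper: builds a flat grid in the XZ plane as indexed triangles.
// cols/rows = number of cells along X and Z, size = edge length of the whole grid.
function createGridGeometry(cols, rows, size) {
  const positions = [];
  const indices = [];

  // One vertex per grid corner, centered on the origin, with y = 0.
  for (let z = 0; z <= rows; z++) {
    for (let x = 0; x <= cols; x++) {
      positions.push((x / cols - 0.5) * size, 0, (z / rows - 0.5) * size);
    }
  }

  // Two triangles per cell, wound counter-clockwise when seen from +Y.
  const stride = cols + 1;
  for (let z = 0; z < rows; z++) {
    for (let x = 0; x < cols; x++) {
      const a = z * stride + x; // corner at (x, z)
      const b = a + 1;          // corner at (x + 1, z)
      const c = a + stride;     // corner at (x, z + 1)
      const d = c + 1;          // corner at (x + 1, z + 1)
      indices.push(a, c, b, b, c, d);
    }
  }

  // Uint16 indices limit this sketch to 65536 vertices.
  return {
    positions: new Float32Array(positions),
    indices: new Uint16Array(indices),
  };
}
```

The two typed arrays can be uploaded to WebGL buffers with `gl.bufferData`. Keeping vertex generation and index generation in separate loops like this is one way to stop procedural mesh code from getting tangled as shapes grow more complex.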

Custom shaders

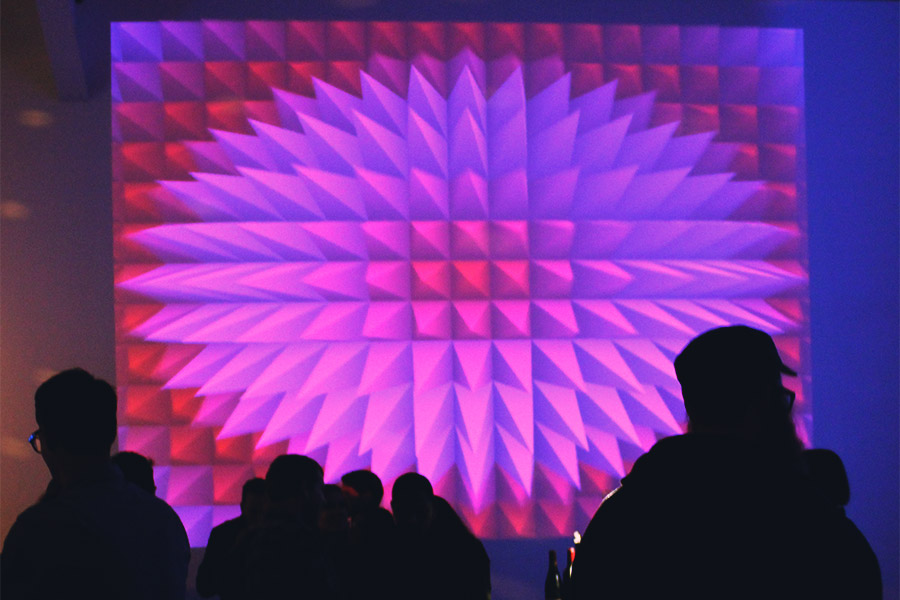

Every rendered object runs on a custom shader. The demo isn’t built within the framework of a classic 3D engine, which has lights and built-in types of materials. Instead, every object has its own custom shader that manages its color, lighting, and some elements of the animation. In the city, the buildings, floors, and windows are created procedurally inside a shader. The purple pyramids have a pseudo-ambient occlusion effect that’s built into their shader as well.

The pseudo-ambient occlusion effect is so simple that it has no noticeable impact on performance. A more robust but complicated system like SSAO would be overkill, and might negatively impact performance in this case.

Post effects

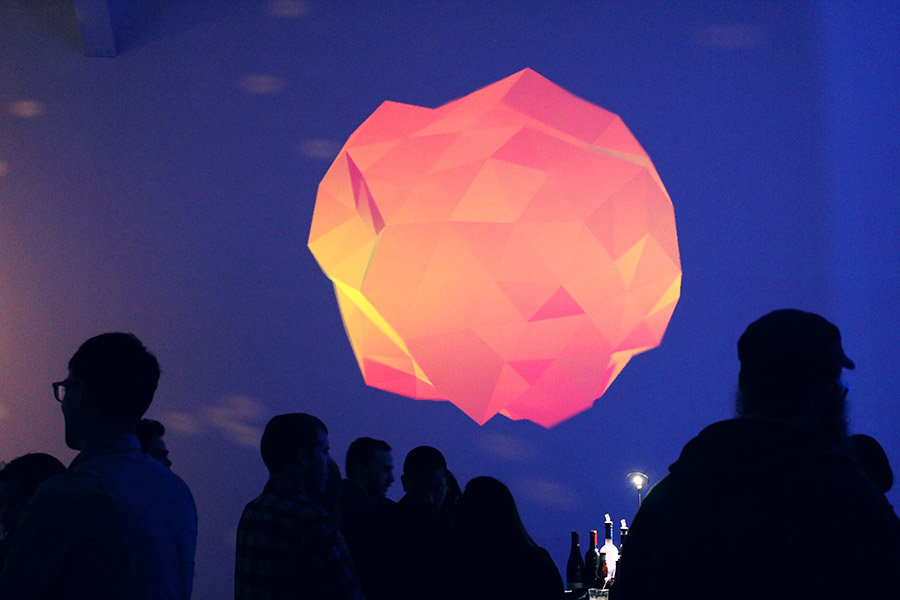

On top of the shaders used to render individual objects, almost all the demos have fullscreen post effects applied – like the scan lines in the animated cube, or the distortions in the orange gem. The city has a glow effect applied that’s intense during nighttime and turned down during the day. I created a few simple post effects in the past, but this time I decided to take it further and play with effects like bloom and depth of field.

One thing that’s important to remember is that many advanced effects require more than one pass, and sometimes you need to render the scene several times to different textures, blending them together in the final pass. For this reason, those effects may appear very complex, but when broken up into individual steps they become much easier to understand and manage.
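To make the idea of chained passes more concrete, here is a minimal sketch of a "ping-pong" setup, where each pass reads the texture written by the previous one. It only covers a linear chain, not the blending of several textures, and it is not the demo's code: `runPostEffects`, the `passes` objects with a `draw(inputTexture)` method, and the `{ framebuffer, texture }` targets are all hypothetical. The only real WebGL call used is `gl.bindFramebuffer`.

```javascript
// Sketch of a ping-pong post-processing chain.
// `passes`  - hypothetical objects whose draw(inputTexture) renders a
//             fullscreen quad with that pass's shader.
// `targets` - a pair of { framebuffer, texture } objects created beforehand.
function runPostEffects(gl, sceneTexture, passes, targets) {
  let input = sceneTexture;

  passes.forEach((pass, i) => {
    const isLast = i === passes.length - 1;
    const target = targets[i % 2];

    // The final pass draws to the screen (null framebuffer);
    // every earlier pass draws into one of the two offscreen textures.
    gl.bindFramebuffer(gl.FRAMEBUFFER, isLast ? null : target.framebuffer);
    pass.draw(input);

    // The texture that was just written becomes the next pass's input,
    // so a pass never reads from the texture it is writing to.
    if (!isLast) input = target.texture;
  });
}
```

With this structure, each effect stays a small, self-contained step, and reordering or removing an effect is just a change to the `passes` array.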

Sound with the Web Audio API and WebRTC

In the same way that WebGL is perfect for rendering visuals, the Web Audio API is a great tool to play and process sound. It’s easy to build complex setups by connecting nodes that have different functions.

Streaming sound

My first lesson was not to load sound files using XMLHttpRequest. Doing so prevents the browser from streaming the file; instead, it has to wait until the entire file is downloaded before it can start playing it. A much better alternative is to dynamically create an <audio> element and assign a src attribute to it. When the play() method is called on this object, it will start playing and streaming. To create a Web Audio node from an <audio> element, just use the audioContext.createMediaElementSource(audioElement) method.
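Put together, the steps above might look like the following minimal sketch. The helper name `createStreamingSource` is made up for this example, and the `document` object is passed in as a parameter only to keep the function self-contained; the Web Audio calls themselves (`createMediaElementSource`, `connect`, `destination`) are standard.

```javascript
// Creates an <audio> element that streams `url` and exposes it as a
// Web Audio source node. `doc` is the page's `document`.
function createStreamingSource(audioContext, doc, url) {
  const audioElement = doc.createElement('audio');
  audioElement.src = url; // the browser streams this instead of preloading it

  // Wrap the element in a node so it can be routed through the audio graph.
  const sourceNode = audioContext.createMediaElementSource(audioElement);
  sourceNode.connect(audioContext.destination);

  return { audioElement, sourceNode };
}

// Possible usage in a browser (the file name is a placeholder):
//   const { audioElement } = createStreamingSource(new AudioContext(), document, 'track.mp3');
//   audioElement.play();
```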

Analyzing sound spectrum

To analyze the sound and detect beats, I used this excellent article by Felix Turner as a guide. Thanks to the Web Audio API’s modular design, it’s easy to configure sound analysis and even switch the audio source in real time between an MP3 file and the microphone. Of course, when using the microphone, it’s a good idea to disable the sound output to avoid a feedback loop.
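The sketch below shows one way such a setup could be wired. It is a deliberately naive stand-in, not the algorithm from the article mentioned above or the demo's code: `averageLevel`, `createBeatDetector`, `routeSource`, and the threshold and decay values are all assumptions chosen for illustration. The Web Audio calls referenced in the comments (`createAnalyser`, `getByteFrequencyData`, `createMediaStreamSource`) are standard.

```javascript
// Average of an AnalyserNode's byte frequency data, normalized to 0..1.
function averageLevel(frequencyData) {
  let sum = 0;
  for (let i = 0; i < frequencyData.length; i++) sum += frequencyData[i];
  return frequencyData.length ? sum / (frequencyData.length * 255) : 0;
}

// Naive beat detector: reports a beat when the level jumps above a
// threshold, then raises the threshold and lets it decay over time.
function createBeatDetector(minLevel = 0.15, decay = 0.98) {
  let threshold = minLevel;
  return function detect(level) {
    if (level > threshold) {
      threshold = level * 1.1; // avoid counting the same hit twice
      return true;
    }
    threshold = Math.max(minLevel, threshold * decay);
    return false;
  };
}

// Routes a source into the analyser, and to the speakers only if `audible`.
// Pass audible = false for the microphone to avoid a feedback loop.
function routeSource(sourceNode, analyser, destination, audible) {
  sourceNode.connect(analyser);
  if (audible) sourceNode.connect(destination);
}

// Possible usage in a browser:
//   const analyser = audioContext.createAnalyser();
//   const data = new Uint8Array(analyser.frequencyBinCount);
//   const detect = createBeatDetector();
//   routeSource(fileSourceNode, analyser, audioContext.destination, true);
//   // microphone: navigator.mediaDevices.getUserMedia({ audio: true })
//   //   .then((stream) => routeSource(
//   //     audioContext.createMediaStreamSource(stream),
//   //     analyser, audioContext.destination, false));
//   // every frame:
//   //   analyser.getByteFrequencyData(data);
//   //   if (detect(averageLevel(data))) { /* react to the beat */ }
```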

Interaction with LeapJS

Smoothing the data

The Leap Motion JavaScript API is very easy to use, so the setup didn’t take too much time. However, the data that comes through often has a high degree of noise. Anyone who has played with accelerometer or GPS data on mobile phones, or with a depth camera, knows that this is quite normal – the raw input from those sensors is usually pretty unstable. There are many strategies to smooth it out, but the simplest one is to create an easing function that runs in a loop, such as:

    var raw = 0, eased = 0, easingFactor = 0.1;

    // invoked when new data arrives,
    // e.g. when the position of the hand has changed
    function onData() {
      raw = sensor.getSomeNoisyValue();
    }

    // invoked on every frame, i.e. ~60fps
    function loop() {
      // move a fraction of the remaining distance towards the raw value
      eased += (raw - eased) * easingFactor;
    }

This way, we get the eased variable that has much less noise than the raw input. Depending on the easingFactor, the value will be eased less or more. Be careful though – the smaller the easingFactor, the slower the reaction time, so if you ease out too much, the application may become unresponsive to the input. In the project source code, you’ll find a wrapper I wrote in order to smooth the raw input data from the device.
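The same easing idea can be packaged into a small reusable object that handles multi-component values such as a 3D position. This is only a sketch under my own assumptions, not the wrapper from the project source: the name `createSmoother` and its `setTarget`/`update` methods are hypothetical.

```javascript
// Hypothetical smoother for array values such as an [x, y, z] position.
function createSmoother(easingFactor = 0.1) {
  let target = null; // latest raw sample
  let eased = null;  // smoothed value

  return {
    // Call whenever the sensor delivers new data.
    setTarget(raw) {
      target = raw.slice();
      // Start at the first sample instead of easing in from zero.
      if (eased === null) eased = raw.slice();
    },

    // Call once per frame; returns the smoothed value (or null before any data).
    update() {
      if (eased === null) return null;
      for (let i = 0; i < eased.length; i++) {
        eased[i] += (target[i] - eased[i]) * easingFactor;
      }
      return eased;
    },
  };
}
```

Each frame closes a fixed fraction of the remaining gap, so with an easing factor of 0.1 it takes about 22 frames (roughly a third of a second at 60fps) to cover 90% of a sudden jump. That is a useful number to keep in mind when trading smoothness against responsiveness.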

Checking for open/closed fingers

In one of the demos – the colorful ball made of lines – I wanted to change the visual based on how open/closed the hand of the user is. The idea was that the user should be able to squeeze the ball and then release it. For this, I needed to check all the fingers and find a way to determine their position.

One way of doing this is to take the dot product of each finger’s normalized direction vector and a unit vector pointing downwards. The dot product will be 1 if the finger is pointing straight down, and will gradually move towards -1 as the user lifts up their finger. The more the fingers point downwards, the more the user’s hand is closed.

However, one important consideration here is that the Leap Motion Controller will stop detecting a finger when it’s too close to the palm. To account for this, I assume the user has five fingers. All of the missing fingers are considered closed and contribute to the overall level of how closed the hand is.
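The approach described above can be sketched as a small function. This is an illustration under stated assumptions rather than the demo's code: `handClosedness` is a made-up name, finger directions are assumed to be unit-length `[x, y, z]` arrays, and "down" is assumed to be the negative Y axis.

```javascript
const DOWN = [0, -1, 0]; // assumed: Y axis points up

function dot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Returns 0 for a fully open hand and 1 for a fully closed one.
// `fingerDirections` holds one unit vector per finger that is currently detected.
function handClosedness(fingerDirections, expectedFingers = 5) {
  const visible = fingerDirections.slice(0, expectedFingers);
  let total = 0;

  for (const direction of visible) {
    // Remap the dot product from [-1, 1] to [0, 1]: 1 means pointing straight down.
    total += (dot(direction, DOWN) + 1) / 2;
  }

  // Fingers the device no longer sees are counted as fully closed.
  total += expectedFingers - visible.length;

  return total / expectedFingers;
}

// Possible usage with LeapJS (assumed shape of the frame data):
//   Leap.loop(function (frame) {
//     const hand = frame.hands[0];
//     if (!hand) return;
//     const closed = handClosedness(hand.fingers.map((f) => f.direction));
//   });
```

Because the result is a continuous value rather than an open/closed flag, it can be fed through the same kind of easing as the hand position before driving the visuals.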

Final thoughts

The Leap Motion Controller is an interesting input device to use in all kinds of experimental setups. It’s easy to use for the users – as easy as waving their hand and moving their fingers. Depending on the application that you want to build, however, the raw data coming from the device can require some further processing to get good results.

A setup where the user can interact with a large projection on a wall is exciting because of the differences in scale. A small movement of the hand can result in effects and animations on a huge scale. Imagine a Leap Motion device connected to a projection mapping where the user can displace elements of an entire building with a single finger.

I’d love to hear what you think about this project and its live demo. If you’d like to ask me any questions or share your thoughts, post in the comments below.

Bartek Drozdz is the Creative Director at Tool of North America. You can check out more of his recent work at bartekdrozdz.com, follow him on Twitter @bartekd, or email bartek@toolofna.com.
