Leap Motion is a company that has always been focused on human-computer interfaces.

We believe that the fundamental limit in technology is not its size or its cost or its speed, but how we interact with it. These interactions define what we create, how we learn, how we communicate with each other. It would be no stretch of the imagination to say that the way we interact with the world around us is perhaps the very fabric of the human experience.

We believe that this human experience is on the precipice of a great change.

The coming of virtual reality has signaled a great moment in the history of our civilization. We have found in ourselves the ability to break down the very substrate of reality and create new realities, entirely of our own design and of our own imaginations.

As we explore this newfound ability, it becomes increasingly clear that this power will not be limited to some ‘virtual world’ separate from our own. It will spill out like a great flood, uniting what has been held apart for so long: our digital and physical realities.

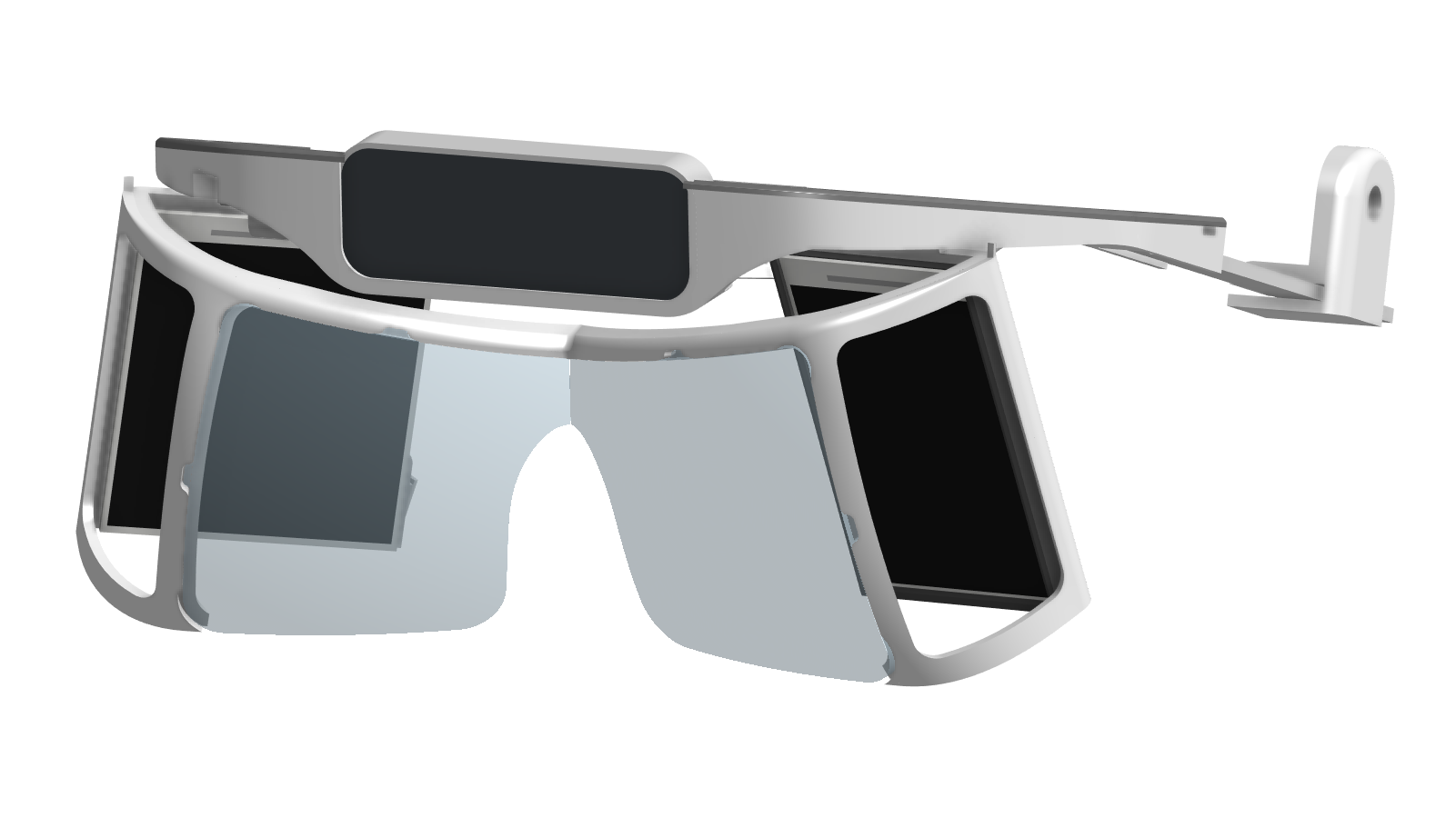

In preparation for the coming flood, we at Leap Motion have built a ship, and we call it Project North Star.
