In yesterday’s post, I talked about the need for 3D design tools for VR that can match the power of our imaginations. After being inspired by street artists like Sergio Odeith, I made sketches and notes outlining the functionality I wanted. From there I researched the space, hoping that someone had already created and released exactly what I was looking for. Unfortunately, I didn’t find it: either the output wasn’t compatible with the DK2, the system was very limited, the input relied on a device I didn’t own, or it was extremely expensive.

Above: one of my early sketches.

When I started designing this project, I chose the PlayStation Move controller as my input device. It looked good on paper, but once I started working with it, I quickly realized that I would spend most of my time just trying to get the device to pair with the OS and communicate properly with Unity, rather than having fun making stuff.

So I switched to the Leap Motion Controller and quickly got my hands into my application. Things moved fast from there. In my original design, all of the basic functions (drawing, changing color, changing size, and changing shape) were assigned to physical buttons, so I simply mapped each of them to a curl-and-release of a different finger on the user’s hands. I chose this because detecting whether or not a finger is extended is simple and robust, and it worked fine in limited user tests (three people).
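The curl-and-release idea can be sketched in a few lines. This is a minimal, hypothetical Python sketch, not Graffiti 3D’s actual Unity code: it assumes some tracking layer already reports, every frame, whether each finger is extended, and it fires an action once when a bound finger (or group of fingers) curls fully and then extends again. The action names (`next_color`, `next_brush_size`, `next_brush_shape`) and the choice of hand for the index and middle/ring/pinky bindings are assumptions; drawing is left out on the assumption that it is a held action rather than a one-shot trigger.

```python
from typing import Dict, List, Tuple

Finger = Tuple[str, str]  # (hand, finger name), e.g. ("left", "thumb")

# Hypothetical bindings loosely following the mapping described in this post.
BINDINGS: Dict[str, Tuple[Finger, ...]] = {
    "next_color": (("left", "thumb"),),
    "next_brush_size": (("right", "index"),),
    "next_brush_shape": (("right", "middle"), ("right", "ring"), ("right", "pinky")),
}


class CurlReleaseTrigger:
    """Fires an action once when all of its fingers curl and then release."""

    def __init__(self, bindings: Dict[str, Tuple[Finger, ...]]) -> None:
        self.bindings = bindings
        # An action is "armed" after its fingers have been seen fully curled.
        self.armed = {action: False for action in bindings}

    def update(self, extended: Dict[Finger, bool]) -> List[str]:
        """Feed one frame of per-finger "is extended" flags; return fired actions."""
        fired: List[str] = []
        for action, fingers in self.bindings.items():
            if any(f not in extended for f in fingers):
                # A finger isn't tracked this frame (e.g. the hand left view): disarm.
                self.armed[action] = False
                continue
            curled = not any(extended[f] for f in fingers)
            if curled:
                self.armed[action] = True
            elif self.armed[action]:
                # Release after a full curl: fire exactly once.
                fired.append(action)
                self.armed[action] = False
        return fired


trigger = CurlReleaseTrigger(BINDINGS)
thumb = ("left", "thumb")
for frame in ({thumb: True}, {thumb: False}, {thumb: True}):
    print(trigger.update(frame))  # prints [], then [], then ['next_color']
```

Disarming a binding whenever its fingers drop out of tracking is a design choice of this sketch: a finger that is curled as the hand leaves the view cannot fire its action when the hand comes back.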

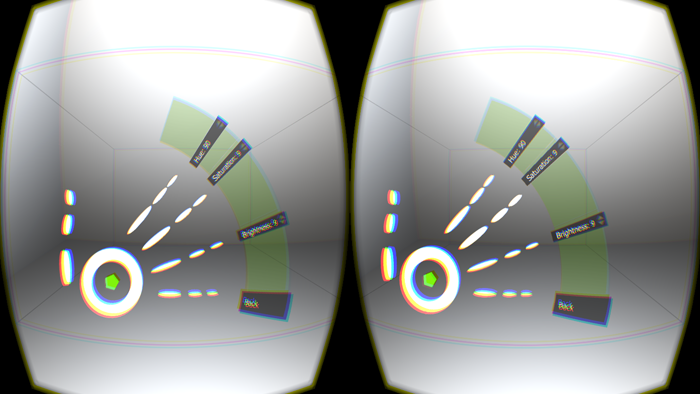

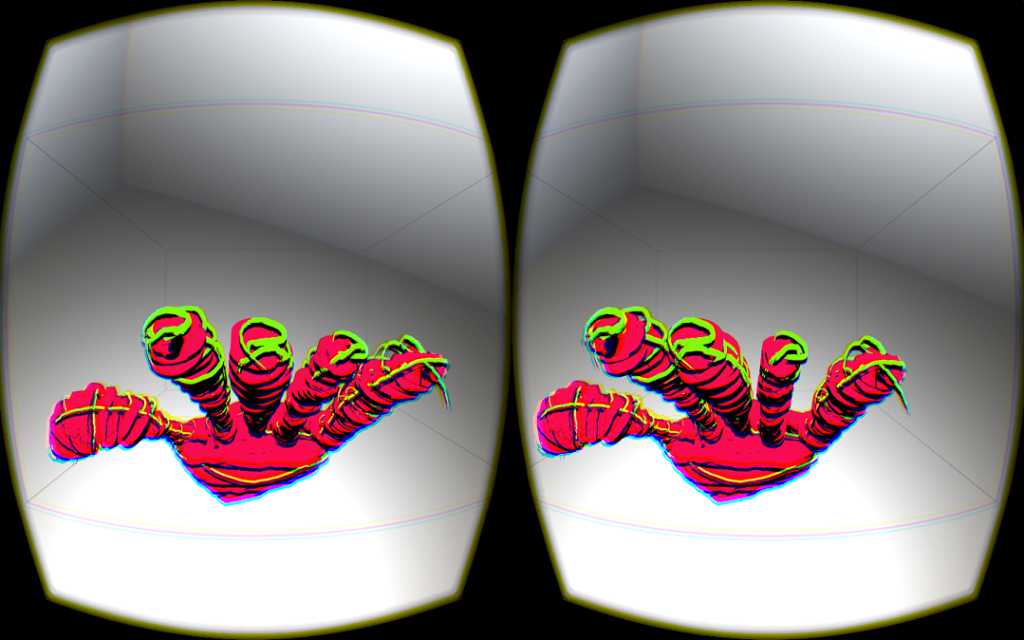

Pinching the thumb of the right hand triggered drawing, pinching the thumb of the left hand changed the color, curling the index finger changed brush size, and curling the middle/ring/pinky fingers changed the brush shape. Here’s what it looked like in regular usage:

After putting it out in the wild, I found that some people were running into issues, such as the triggers accidentally going off as they moved their hands out of view. Users also told me they needed access to “secondary” functions like undoing and clearing the canvas while away from the keyboard. That posed a much bigger problem, because a one-finger-per-function scheme couldn’t accommodate an arbitrary number of settings.

I knew I needed to adopt a menu system of some sort. The new Arm HUD Widget by Leap Motion looked good, but I knew it wouldn’t be released for some time. Then I discovered Hovercast.

Hovercast

Hovercast opened up a world of possibilities within my application. Before integrating it, I didn’t fully appreciate how much the settings interactions affected the usefulness of the application. Under the old paradigm, users were expected to specify drawing colors in a text file before opening the application (so they could cycle through them by curling their left thumb). As a result, hardly anyone modified the colors; users just stuck with whatever presets came with the app. Now people can control the precise output color in-game using Hovercast sliders, which I mapped to hue, saturation, and brightness.
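Mapping three sliders to a color is a standard HSB (also called HSV) to RGB conversion. Here is a minimal Python sketch, assuming each slider reports a value in the 0 to 1 range (an assumption; Hovercast’s actual slider API isn’t shown here). It uses the standard library’s `colorsys.hsv_to_rgb`, and `sliders_to_rgb` is a hypothetical helper name.

```python
import colorsys
from typing import Tuple


def sliders_to_rgb(hue: float, saturation: float, brightness: float) -> Tuple[int, int, int]:
    """Convert three 0..1 slider values (HSB, a.k.a. HSV) to an 8-bit RGB triple."""
    # Clamp each slider value into [0, 1] in case the UI overshoots slightly.
    h, s, v = (min(max(x, 0.0), 1.0) for x in (hue, saturation, brightness))
    r, g, b = colorsys.hsv_to_rgb(h, s, v)
    return (round(r * 255), round(g * 255), round(b * 255))


print(sliders_to_rgb(0.5, 1.0, 1.0))  # (0, 255, 255), pure cyan
```

Because hue wraps around, slider positions 0 and 1 both map to red, which is worth keeping in mind when laying out a hue slider.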

The novelty of seeing the menu at your fingertips seemed to be enough to entertain some people. They would sit and happily poke buttons for minutes before trying to draw anything.

Lessons

I’ve learned so much since I jumped into this project that it’s hard to pick out the most important lessons. A lot of them are personal realizations about marketing, versioning, QA testing, and generally being a “one-man shop” for this application. For example, I released an “update” early on that horribly crippled the application’s framerate. After that, I started using Unity’s performance profiler to check the number of draw calls made each frame under heavy load before releasing builds.

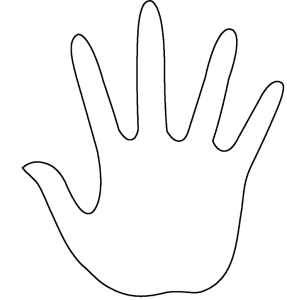

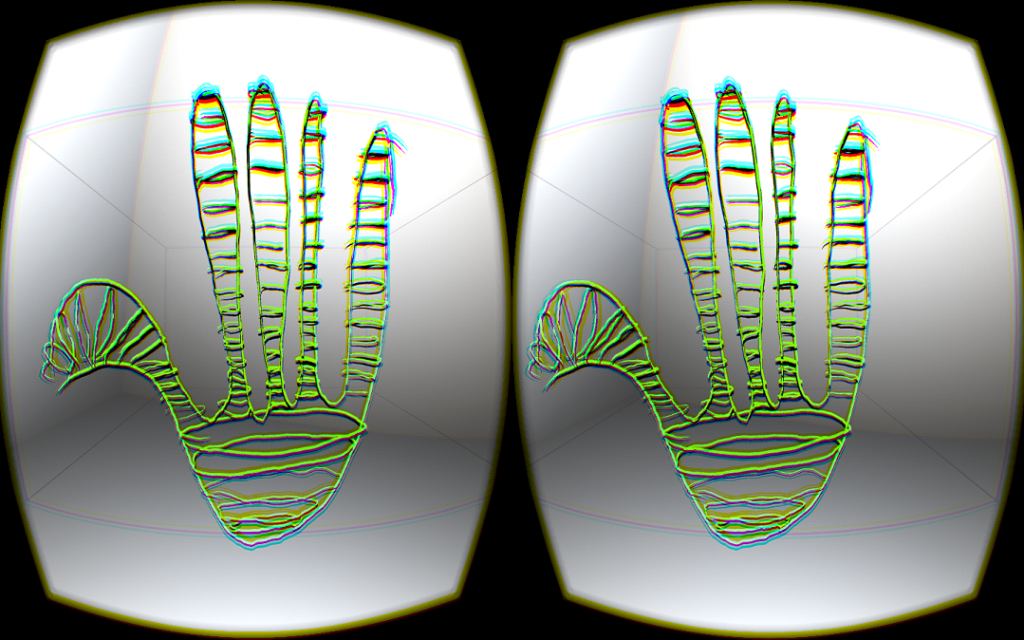

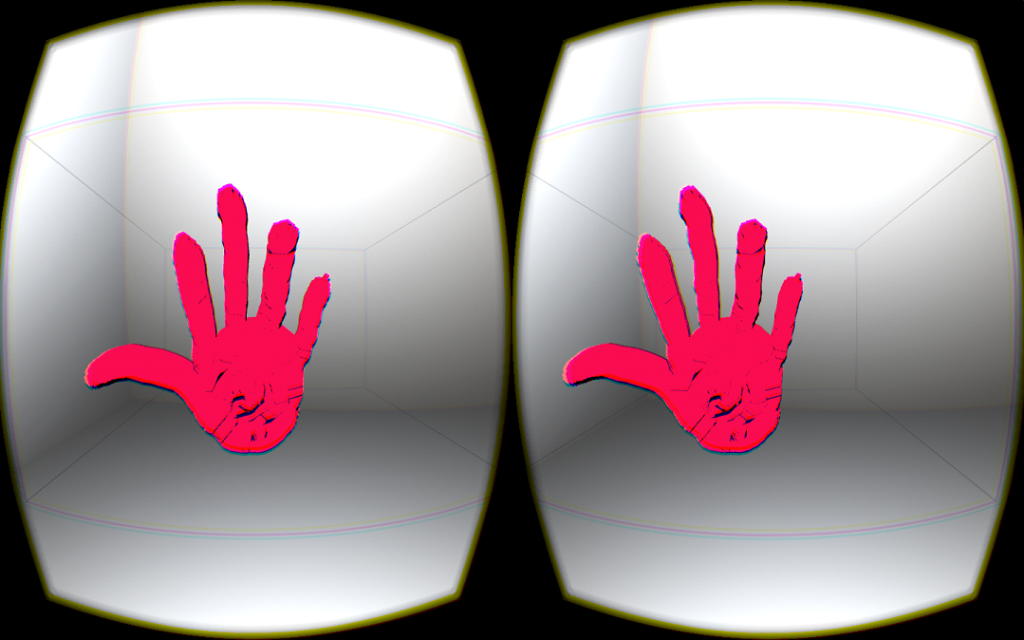

Some of the most fun lessons I’ve learned so far relate to the basics of 3D drawing in general. My usual approach to drawing in 2D (using outlines of cross-sections of the object I want to depict) is inadequate for 3D; I have to think in volumes more than outlines. For example, here’s how I would normally draw a hand in 2D:

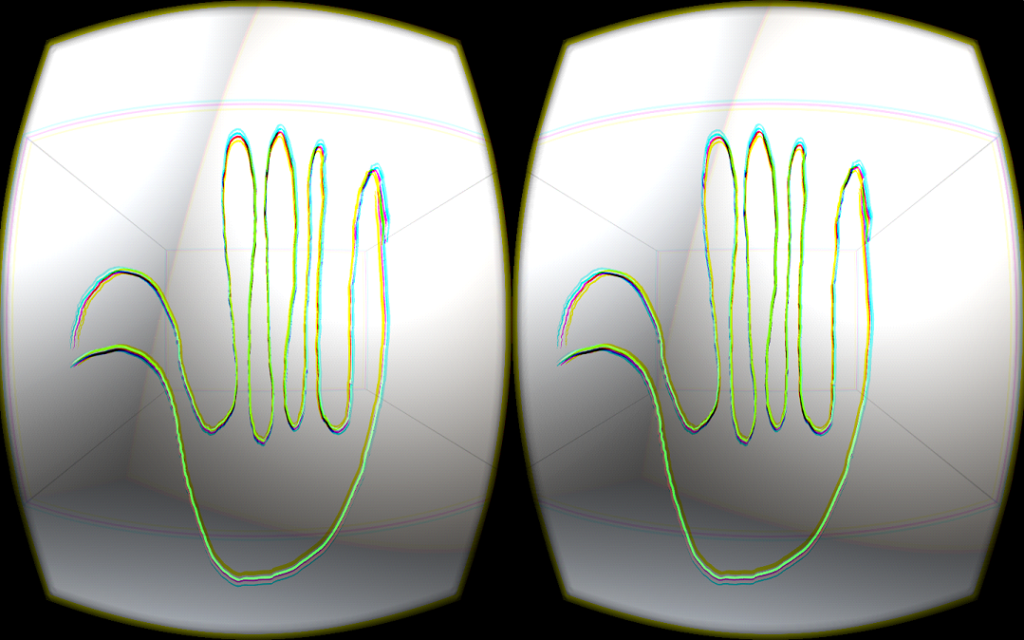

Looks great! But if I drew this in Graffiti 3D, I’d end up with something like this:

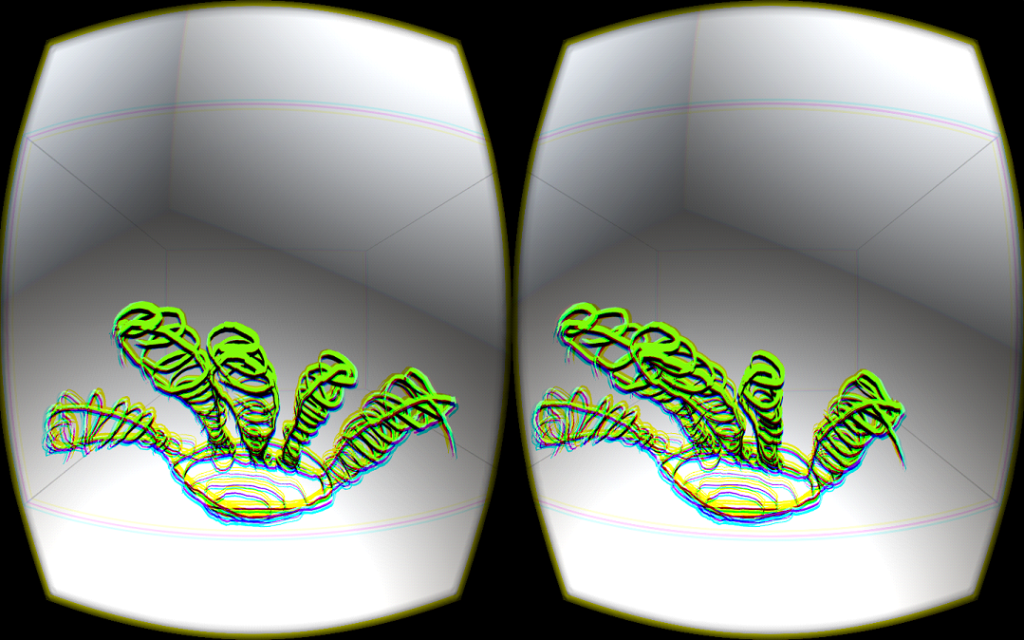

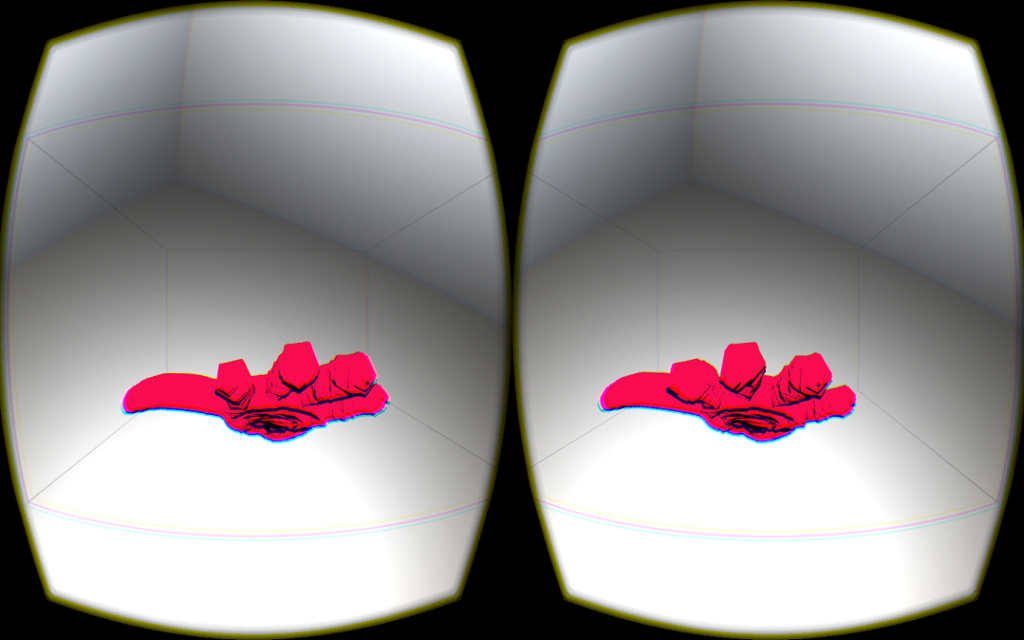

My initial expectation was that the best way to draw precisely in 3D would be to start with a cross-section as usual, and then draw perpendicular cross-sections to “fill out” the volume. That isn’t too bad, but it’s extremely labor-intensive and ends up looking a bit shaky, sloppy, and inconsistent.

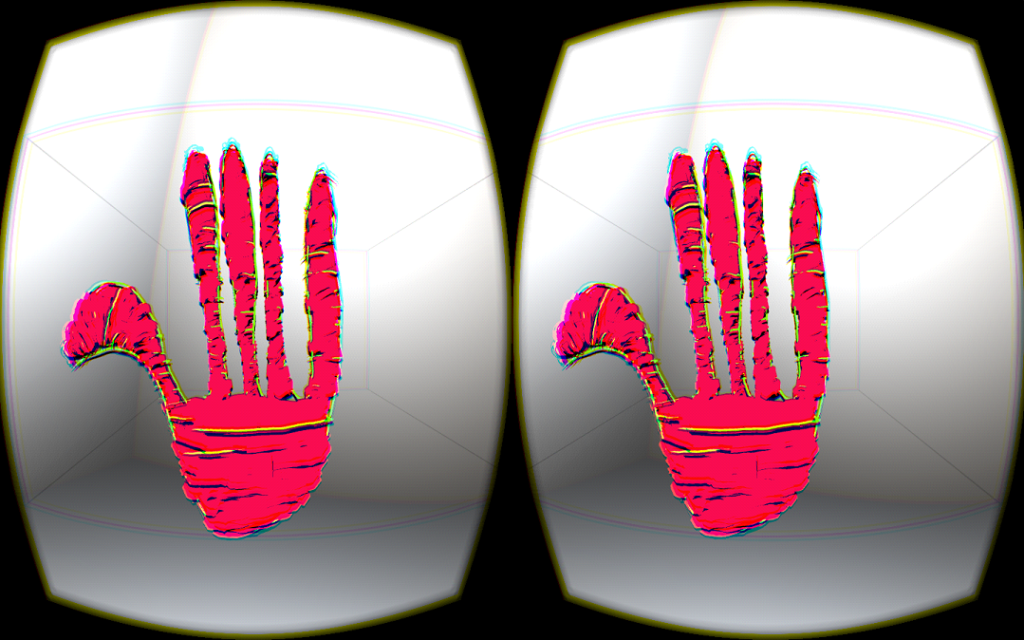

The above hand didn’t turn out too badly (although my initial outline could have been much better). However, it took me a few minutes of careful drawing, and the result was very scribbly and noisy. If, instead of focusing on cross-sections, I focus on filling the space taken up by the fingers and the thickness of the hand, I can produce something much better looking, much faster. This is what I did in about 30 seconds with a brush the width of a finger (so each finger is one brush stroke):

A bit better, and much faster than the first one. I’m not used to thinking like this for 2D art because I don’t have dynamic control over the width of the pencil tip when I’m doodling on paper. Paintbrushes of varying thicknesses are probably the best 2D analog to this.

I’m still not an expert at drawing in 3D, but I’m getting a lot better. Making clean, expressive strokes requires a lot of new muscle memory, and “gorilla arm” is a challenge early on, though my arm gets less tired the more I use the app.

Some of the issues I have with detailed work are due to limitations in the current generation of Leap Motion hardware: the tracking volume is small, and hand pose estimation breaks down near 3D objects in space. Even so, the tracking is surprisingly precise, low-latency, and solid. Other issues are due to limitations in my human hardware; sometimes I just can’t hold my arm still enough to get super fine details right. I think that will get easier with practice.

Designing with Leap Motion

I’ve learned some good lessons about working with the Leap Motion Controller while developing this project. Overall, getting up and running with the device was very straightforward: the Unity assets can be dropped into a project and start working immediately, and the documentation is great. Where I did run into issues was with more experimental features, such as the passthrough quads.

When I began working with passthrough mode, I had trouble sizing and placing the passthrough quads (the surfaces that display the sensor image to each of the user’s eyes) so that the image lined up with the user’s in-game hands. I eventually solved the problem through trial and error, and I hope my forum post about it helps other people avoid wasting time figuring it out.
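One relationship that helps when sizing a quad like this is basic trigonometry: a flat quad at distance d from the eye spans a horizontal field of view θ when its width is w = 2d·tan(θ/2). The Python sketch below assumes the goal is to make the quad subtend the same angle as the sensor image, and it ignores lens distortion in that image. It is not the fix from the forum post; `passthrough_quad_size` is a hypothetical helper, and the field-of-view and aspect values you pass in are assumptions to measure for your own setup.

```python
import math
from typing import Tuple


def passthrough_quad_size(distance: float, horizontal_fov_deg: float,
                          aspect: float) -> Tuple[float, float]:
    """Width and height of a quad at `distance` that spans `horizontal_fov_deg`.

    `aspect` is image width / height. Lens distortion is ignored.
    """
    half_angle = math.radians(horizontal_fov_deg) / 2.0
    width = 2.0 * distance * math.tan(half_angle)
    return width, width / aspect


# Example: a quad 1 unit away spanning 90 degrees is about 2 units wide.
print(passthrough_quad_size(1.0, 90.0, 2.0))
```

Since width grows linearly with distance, moving the quad twice as far away requires making it twice as large to keep the image aligned.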

Passthrough mode also occasionally caused the user’s virtual hands to misalign with the sensor image. It was one of those bugs that would rear its head at the worst times (such as while demoing to friends and family) and then disappear when I tried to reproduce it. Eventually, someone pointed me to a thread on the Leap Motion forums explaining that the problem is caused by “robust mode” sometimes kicking in, which changes the resolution of the sensor image, so the solution was simply to disable robust mode in the Leap Motion Control Panel. (Also, don’t forget to enable “allow images” before experimenting with AR passthrough, or you’ll just see a solid gray color when you enable the passthrough quads!)

What’s next?

This is a huge design space, and the tricky part is choosing what to work on next. Users have suggested everything from refining the tool into “the Photoshop of 3D” to incorporating the drawing function into some sort of game mechanic.

At this stage, I’m most interested in keeping the application simple and focused on freehand 3D art while making it more useful and breathtaking. The dynamic mesh generation algorithm isn’t as smooth as it could be, but I’ve avoided heavy post-processing for now in the interest of performance. Users can currently only paint with different colors of a “toon shader” material (meant to mimic cel shading), but imagine painting with custom materials like wood, porcelain, glass, metal, drippy goo, rock, smoke, skin, grass, and so on.
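For readers curious how freehand strokes can become geometry, one common approach is to sweep a circle along the stroke: place a ring of vertices around each sampled hand position, oriented perpendicular to the direction of travel, and stitch neighboring rings together with triangles. The Python sketch below illustrates that generic technique; it is not Graffiti 3D’s actual algorithm, and `tube_mesh`, `radius`, and `sides` are hypothetical names. Here `radius` plays the role of brush size.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(a: Vec3) -> Vec3:
    n = math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)
    if n == 0.0:
        raise ValueError("degenerate stroke: repeated or back-tracking points")
    return (a[0] / n, a[1] / n, a[2] / n)


def tube_mesh(points: List[Vec3], radius: float, sides: int = 8):
    """Sweep a circle of `radius` along a polyline stroke.

    Returns (vertices, triangles), where each triangle is a triple of
    vertex indices. Needs at least two points.
    """
    if len(points) < 2:
        raise ValueError("a stroke needs at least two points")
    vertices: List[Vec3] = []
    for i, p in enumerate(points):
        # Tangent from the neighboring samples (one-sided at the ends).
        prev_p = points[max(i - 1, 0)]
        next_p = points[min(i + 1, len(points) - 1)]
        t = _normalize(_sub(next_p, prev_p))
        # Any reference axis not parallel to the tangent gives a valid frame.
        ref = (0.0, 1.0, 0.0) if abs(t[1]) < 0.9 else (1.0, 0.0, 0.0)
        u = _normalize(_cross(t, ref))
        v = _cross(t, u)  # unit length, since t and u are orthonormal
        for k in range(sides):
            angle = 2.0 * math.pi * k / sides
            c, s = radius * math.cos(angle), radius * math.sin(angle)
            vertices.append(tuple(p[j] + c * u[j] + s * v[j] for j in range(3)))
    triangles: List[Tuple[int, int, int]] = []
    for i in range(len(points) - 1):
        for k in range(sides):
            a0 = i * sides + k               # this ring
            a1 = i * sides + (k + 1) % sides
            b0, b1 = a0 + sides, a1 + sides  # next ring
            triangles.append((a0, b0, a1))
            triangles.append((a1, b0, b1))
    return vertices, triangles


verts, tris = tube_mesh([(0.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 0.0, 2.0)], radius=0.5)
print(len(verts), len(tris))  # 24 32
```

Building each ring’s frame from a fixed reference axis is the simplest option, but the rings can twist or flip when the tangent crosses the switch-over threshold; parallel-transport frames are a common way to get smoother tubes.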

The possibilities really get crazy when you consider all of the effects and structures that can be generated procedurally in a game engine like Unity. Users could release glowing worms that oscillate in the direction of the instantaneous velocity of their movement and pulse colors to the music they’re listening to. Or fill a volume with vegetation that grows over time. Or use a “city” brush to draw roads and watch buildings grow around them.

Following this train of thought, this tool could be useful for large scale map/space creation in addition to small-scale sculpting. Projects like Paint 42 that include an option to enlarge/resize meshes have me extremely excited because they demonstrate the architectural possibilities of this idea. Users can draw small columns and turn them into skyscrapers with the push of a button. I don’t think that’s ever been possible for anyone but the lucky few designers who had access to good VR tech before this recent boom occurred.
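Turning a small column into a skyscraper is, at its core, a uniform scale about a pivot point such as the base of the column: each vertex v moves to p + s(v - p), where p is the pivot and s the scale factor. A minimal Python sketch, assuming a mesh is just a list of vertex positions; `scale_about_pivot` is a hypothetical helper, and this is not how Paint 42 or any particular engine implements resizing (in Unity you would more likely change an object’s transform scale).

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def scale_about_pivot(vertices: List[Vec3], pivot: Vec3, factor: float) -> List[Vec3]:
    """Uniformly scale vertices about `pivot`: v' = pivot + factor * (v - pivot)."""
    px, py, pz = pivot
    return [(px + factor * (x - px), py + factor * (y - py), pz + factor * (z - pz))
            for x, y, z in vertices]


# A unit-tall "column" based at (2, 0, 3), scaled 100x about its base.
column = [(2.0, 0.0, 3.0), (2.0, 1.0, 3.0)]
print(scale_about_pivot(column, pivot=(2.0, 0.0, 3.0), factor=100.0))
# [(2.0, 0.0, 3.0), (2.0, 100.0, 3.0)]
```

Choosing the base as the pivot keeps the enlarged structure standing on the same spot instead of sinking into or floating above the ground.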

Vitally, Graffiti 3D needs to be freed from cables and from the limited field of view of the positional tracking camera. Walking freely around a piece in a fully interactive AR room, unconstrained by tracking range or cables, is going to feel like something else entirely. From there, people’s creations need to be tied to the real world. We should be able to modify our spaces freely by leaving virtual 3D material anywhere. If I’m walking through a park and think a gazebo or sculpture would look beautiful somewhere, I should be able to draw it there for anyone to see.

These are just a few of the thoughts swirling in my head about what the future could hold for this application (and similar ones). It’s an exciting time to be alive.

Epilogue: Graffiti 3D on Twitch!

Recently, Scott joined us on our Twitch channel to talk about the development and vision behind Graffiti 3D, and where the VR art community is going in the years ahead:

For cutting-edge projects and demos, tune in every Tuesday at 5pm PT to twitch.tv/leapmotiondeveloper.
