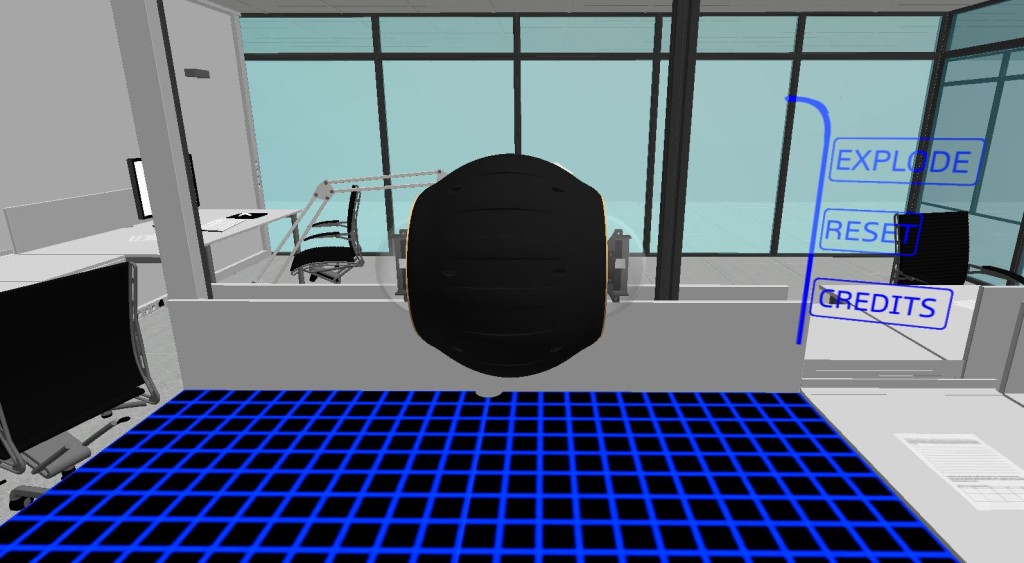

What if you could disassemble a robot at a touch? Motion control opens up exciting possibilities for manipulating 3D designs, with VR adding a whole new dimension to the mix. Recently, Battleship VR and Robot Chess developer Nathan Beattie showcased a small CAD experiment at the Avalon Airshow. Supported by the School of Engineering, Deakin University, the demo lets users take apart a small spherical robot created by engineering student Daniel Howard.

Nathan has since open sourced the project, although the laboratory environment is only available in the executable demo for licensing reasons. Check out the source code at github.com/Zaeran/CAD-Demo.

What’s the story behind your 3D CAD demo?

I got my Leap Motion Controller at the start of 2014, and quickly went about making demos. My first major project was Battleship VR, which was intended to investigate UI interaction with finger tracking. It can still be found on Oculus Share, although it’s quite old now. Aside from this, my major work with Leap Motion is my game Robot Chess, a relatively simple game which allows you to use your hands to pick up and move around chess pieces as you play against a robotic AI opponent. I’ve also built a couple of smaller proof-of-concept pieces that people may have seen on the leapmotion or oculus subreddits.

Last year, I finished my IT undergrad at Deakin University, majoring in software development. I’m currently doing my honours, researching the feasibility of VR and haptics for midwifery training, using Deakin’s upcoming Virtual Reality Lab. I’ll also be building fun demos for their CAVE (cave automatic virtual environment) throughout the year. My current goal is to create an immersive fishing simulator, for when uni gets a bit too stressful!

Do you have any UX or design tips for developers looking to create similar experiences?

That’s a pretty broad question. Overall, I’d say to keep it simple. You don’t need fancy gestures or over-the-top animations, as it just complicates things for the average user. When it comes to grabbing things, the biggest issue I found was having objects that were large enough to be grabbed accurately, while at the same time allowing the entire model to fit in the workspace. I opted not to use magnetic pinch though, so grabbing accuracy was a much bigger deal as the pinch location had to be within an object’s bounding box.
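To make the strict grab rule concrete, here is a minimal sketch of a pinch test against bounding boxes. It is an illustration only, not code from the demo: it assumes axis-aligned boxes, and the names `Vec3`, `Bounds`, and `pick_grabbed_part` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


@dataclass(frozen=True)
class Bounds:
    """Axis-aligned bounding box given by its minimum and maximum corners (assumed shape)."""
    min: Vec3
    max: Vec3

    def contains(self, p: Vec3) -> bool:
        # The point must lie within the box on all three axes.
        return (self.min.x <= p.x <= self.max.x
                and self.min.y <= p.y <= self.max.y
                and self.min.z <= p.z <= self.max.z)


def pick_grabbed_part(pinch: Vec3, parts: Dict[str, Bounds]) -> Optional[str]:
    """Return the first part whose box contains the pinch point, or None on a miss."""
    for name, bounds in parts.items():
        if bounds.contains(pinch):
            return name
    # No snapping to the nearest part: a pinch outside every box grabs nothing.
    return None
```

Because nothing snaps to the nearest part, a pinch that lands just outside a small component returns `None`, which is why part size matters so much with this approach.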

When it comes to menus, I’m a pretty big fan of making buttons that feel responsive when you touch them, so personally I’d suggest a simple fade-in/fade-out with a bit of movement, as you don’t want your menus to take focus away from the main piece.
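One way to sketch that kind of fade with a little movement is to drive both opacity and a small positional offset from a single eased progress value. The function names, the 0.25-second duration, and the 3 cm slide distance below are assumptions chosen for illustration, not values from the demo.

```python
from typing import Tuple


def smoothstep(t: float) -> float:
    """Ease in and out: clamp t to [0, 1], then apply 3t^2 - 2t^3."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def menu_state(elapsed: float, duration: float = 0.25, slide: float = 0.03,
               showing: bool = True) -> Tuple[float, float]:
    """Return (alpha, offset) for a menu at `elapsed` seconds into its transition.

    alpha  -- opacity from 0.0 (invisible) to 1.0 (fully visible)
    offset -- distance in metres from the menu's resting position
    `duration` must be positive; both defaults are illustrative guesses.
    """
    progress = smoothstep(elapsed / duration)
    if not showing:
        # Fading out runs the same curve in reverse.
        progress = 1.0 - progress
    return progress, (1.0 - progress) * slide
```

Calling `menu_state` once per frame with the time since the transition started gives a menu that slides a short distance into place as it becomes opaque, then stays put.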

What do VR and hand tracking each add to the experience of seeing and disassembling a thing in mid-air?

The VR side of things puts the model directly in front of the user in 3D space, so they can see its true scale far more easily than they could from diagrams or a rendering on a 2D screen. We found that being able to view and manipulate the model in VR gives users a better understanding of how an object is put together, and potentially how it functions, depending on the detail of the model.

It also allows users to safely disassemble and observe objects that may be hazardous in the real world, or at high risk of breaking when handled by inexperienced users. Furthermore, with technologies such as VR and hand tracking, inexperienced users can easily pick up how to manipulate objects in the virtual space, and can learn how components fit together far more easily than by viewing a regular diagram.

What are the creative and design possibilities that a CAVE represents?

The most obvious benefit of a CAVE from a design perspective is the ability to walk around a 1:1 scale model of the object you are designing, potentially saving a lot of time and money by finding errors in the design before making physical prototypes. For example, you can walk through a building before it’s built, or test out the design of a new car without requiring clay modelling or scale models to be built. While these things can be done on consumer VR headsets, the CAVE provides much higher graphical fidelity, and also benefits from being a multi-user experience.

Also, as CAVEs usually come with a form of tracking system (similar to motion capture), one concept I’m incredibly interested in is the ability to build and sculpt objects with your own hands at a high level of precision, instead of trying to model fine detail accurately through a regular 2D interface. Combined with a large-scale haptic device, you can actually reach out and touch a completely virtual object.

What will prototyping and design look like in 10 years?

The best example I can give is the short film World Builder by Bruce Branit.

From the moment I saw it, it’s been my go-to example of how CAD software will look in the future. It’s actually interesting to see that a few of the UI concepts in this film have inspired Leap Motion assets. All we’re really waiting for at this stage is a way to accurately track hand and finger movement in a space of a few cubic meters. Once that tech comes around, the floodgates will open, and fields such as modelling, design, and prototyping will become a lot simpler and more fun.

However, if I had to give specific points:

- High-end 3D design studios will make use of fully virtual environments, potentially even having rooms dedicated to VR and body tracking.

- The ability to create and sculpt objects with your hands.

- Collaboration – multiple people working in the same environment simultaneously.

What do you think of Nathan’s demo, and where will 3D design go next? Let us know in the comments, tweet Nathan @Zaeran, or check out his new website at nbvr.com.au.