Virtual reality. Augmented reality. Mixed, hyper, modulated, mediated, diminished reality. All of these flavours are really just entry points into a vast world of possibilities where we can navigate between our physical world and limitless virtual spaces. These technologies contain immense raw potential, and have advanced to the stage where they are pretty good, and […]


At Leap Motion, we’re always looking to advance our interactions in ways that push our hardware and software. As one of the lead engineers on Project North Star, I believe that augmented reality can be a truly compelling platform for human-computer interaction. While AR’s true potential comes from dissolving the barriers between humans and computers, […]

VR, AR and hand tracking are often considered to be futuristic technologies, but they also have the potential to be the easiest to use. For the launch of our V4 software, we set ourselves the challenge of designing an application where someone who has never even touched a VR headset could figure out what to […]

At Leap Motion, we envision a future where the physical and virtual worlds blend together into a single magical experience. At the heart of this experience is hand tracking, which unlocks interactions uniquely suited to virtual and augmented reality. To explore the boundaries of interactive design in AR, we created Project North Star, which drove […]

Leap Motion is a company that has always been focused on human-computer interfaces. We believe that the fundamental limit in technology is not its size or its cost or its speed, but how we interact with it. These interactions define what we create, how we learn, how we communicate with each other. It would be […]

There’s something magical about building in VR. Imagine being able to assemble weightless car engines, arrange dynamic virtual workspaces, or create imaginary castles with infinite bricks. Arranging or assembling virtual objects is a common scenario across a range of experiences, particularly in education, enterprise, and industrial training – not to mention tabletop and real-time strategy […]

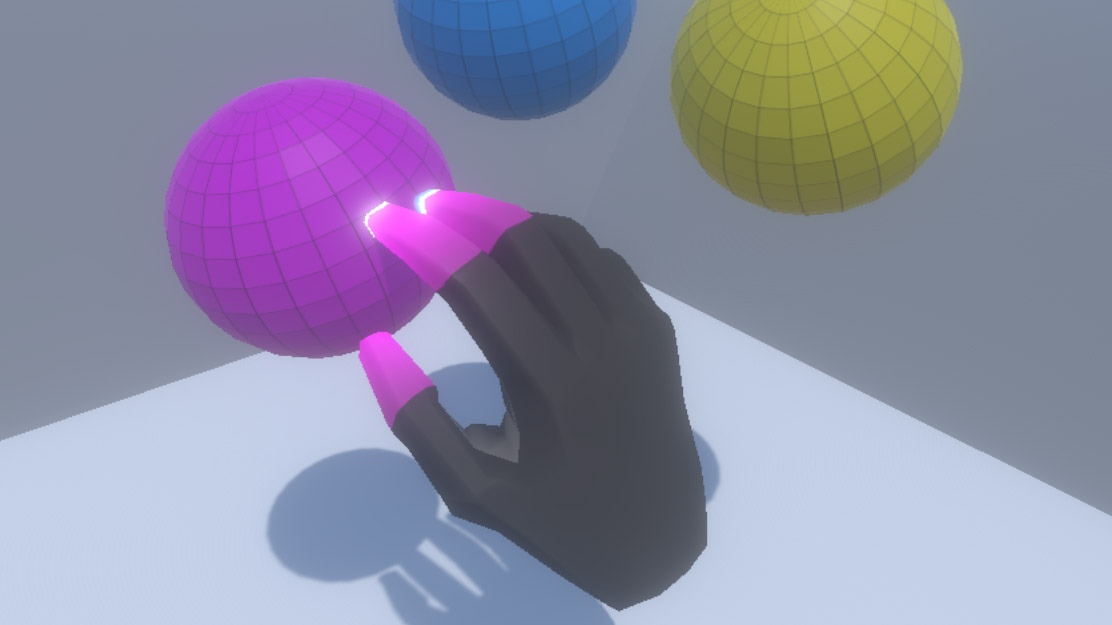

When you reach out and grab a virtual object or surface, there’s nothing stopping your physical hand in the real world. To make physical interactions in VR feel compelling and natural, we have to play with some fundamental assumptions about how digital objects should behave. The Leap Motion Interaction Engine handles these scenarios by having […]

As mainstream VR/AR input continues to evolve – from the early days of gaze-only input to wand-style controllers and fully articulated hand tracking – so too do the virtual user interfaces we interact with. Slowly but surely, we’re moving beyond flat UIs ported over from 2D screens and toward a future filled with spatial interface paradigms that take advantage of depth and volume.

Last time, we looked at how an interactive VR sculpture could be created with the Leap Motion Graphic Renderer as part of an experiment in interaction design. Now that the sculpture’s shapes are rendering, we can craft and code the layout and control of this 3D shape pool, along with the reactive behaviors of its individual objects.

By adding the Interaction Engine to our scene and InteractionBehavior components to each object, we have the basis for grasping, touching and other interactions. But for our VR sculpture, we can also use the Interaction Engine’s robust and performant awareness of hand proximity. With this foundation, we can experiment quickly with different reactions to hand presence, pinching, and touching specific objects. Let’s dive in!
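The Interaction Engine itself is a Unity (C#) module, and its API is not reproduced here. As a language-neutral sketch of the kind of hand-proximity reaction described above, the Python below maps the distance between a tracked hand point and a shape to a weight from 0 to 1 using a smoothstep falloff, then uses that weight to grow the shape. The names (`Vec3`, `proximity_weight`, `reaction_scale`) and the radii (30 cm hover, 2 cm touch) are hypothetical, chosen only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Vec3:
    """Minimal 3D point in metres (hypothetical stand-in for an engine vector type)."""
    x: float
    y: float
    z: float

    def distance_to(self, other: "Vec3") -> float:
        return math.sqrt(
            (self.x - other.x) ** 2 + (self.y - other.y) ** 2 + (self.z - other.z) ** 2
        )


def smoothstep(edge0: float, edge1: float, x: float) -> float:
    """Hermite interpolation: 0 at edge0, 1 at edge1, clamped outside that range."""
    t = max(0.0, min(1.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)


def proximity_weight(
    hand: Vec3, obj: Vec3, hover_radius: float = 0.3, touch_radius: float = 0.02
) -> float:
    """Hypothetical reaction weight: 0.0 beyond hover_radius, rising to 1.0 at touch_radius.

    The edges are passed in reverse order so the weight grows as the distance shrinks.
    """
    return smoothstep(hover_radius, touch_radius, hand.distance_to(obj))


def reaction_scale(base_scale: float, weight: float, max_growth: float = 0.5) -> float:
    """Hypothetical reactive behavior: grow a shape by up to max_growth as the hand nears."""
    return base_scale * (1.0 + max_growth * weight)


if __name__ == "__main__":
    hand = Vec3(0.0, 0.0, 0.0)
    shapes = [Vec3(0.0, 0.0, 0.5), Vec3(0.0, 0.0, 0.16), Vec3(0.0, 0.0, 0.01)]
    for shape in shapes:
        w = proximity_weight(hand, shape)
        print(f"distance={hand.distance_to(shape):.2f} weight={w:.2f} scale={reaction_scale(1.0, w):.2f}")
```

A smooth falloff like this avoids shapes popping abruptly when a hand crosses a hard threshold; swapping `reaction_scale` for a color or rotation response is one way to try different reactions to hand presence quickly.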