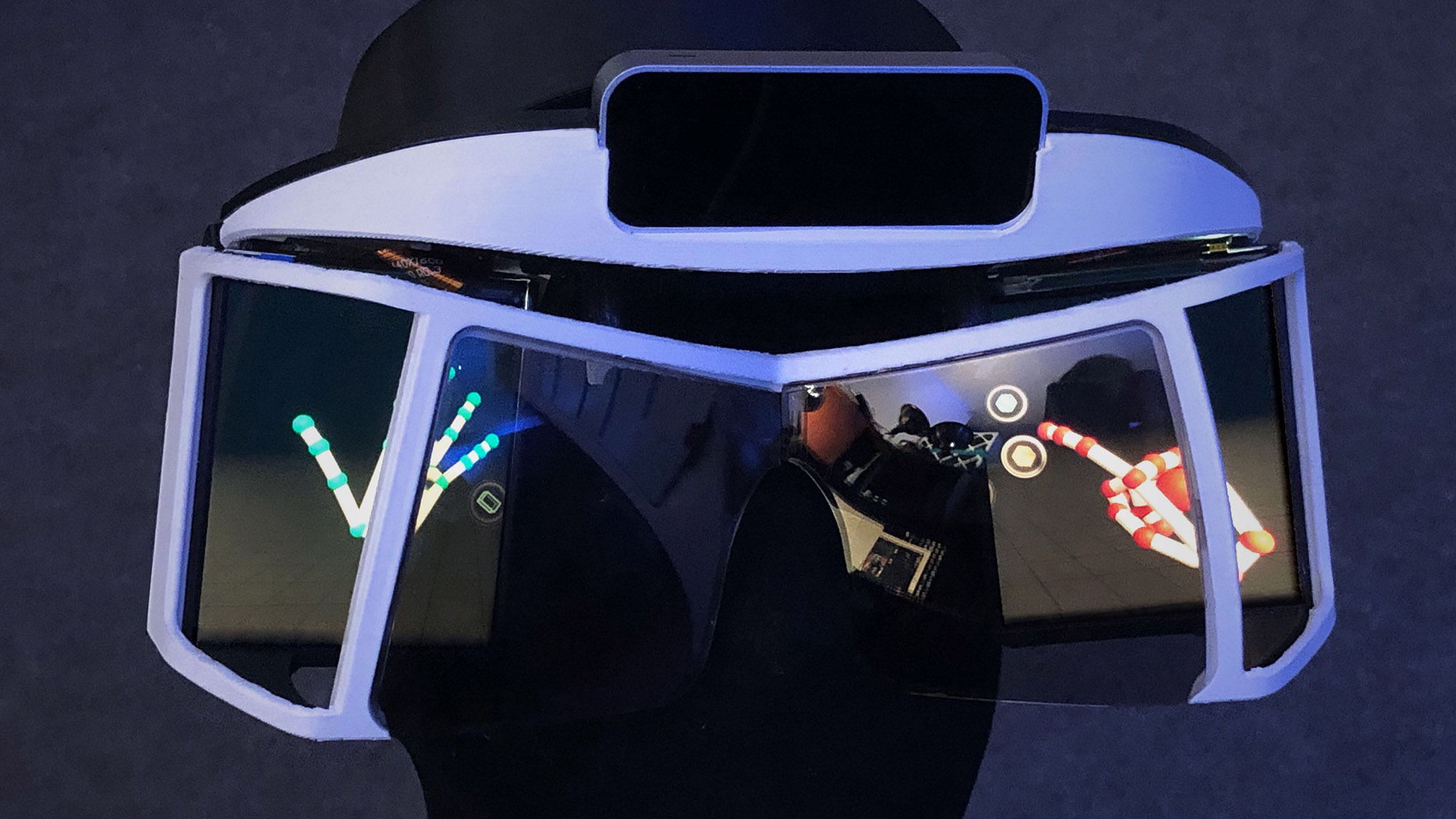

Building the world’s most advanced augmented reality headset isn’t exactly for beginners. But at the world’s first #BuildYourNorthStar workshop, over 20 participants built their own open-source Project North Star headsets in just 48 hours – using components now available to everyone.

The future of open source augmented reality just got easier to build. Since our last major release, we’ve streamlined Project North Star even further, including improvements to the calibration system and a simplified optics assembly that 3D prints in half the time. Thanks to feedback from the developer community, we’ve focused on lower part counts, minimizing support material, and reducing the barriers to entry as much as possible. Here’s what’s new with version 3.1.

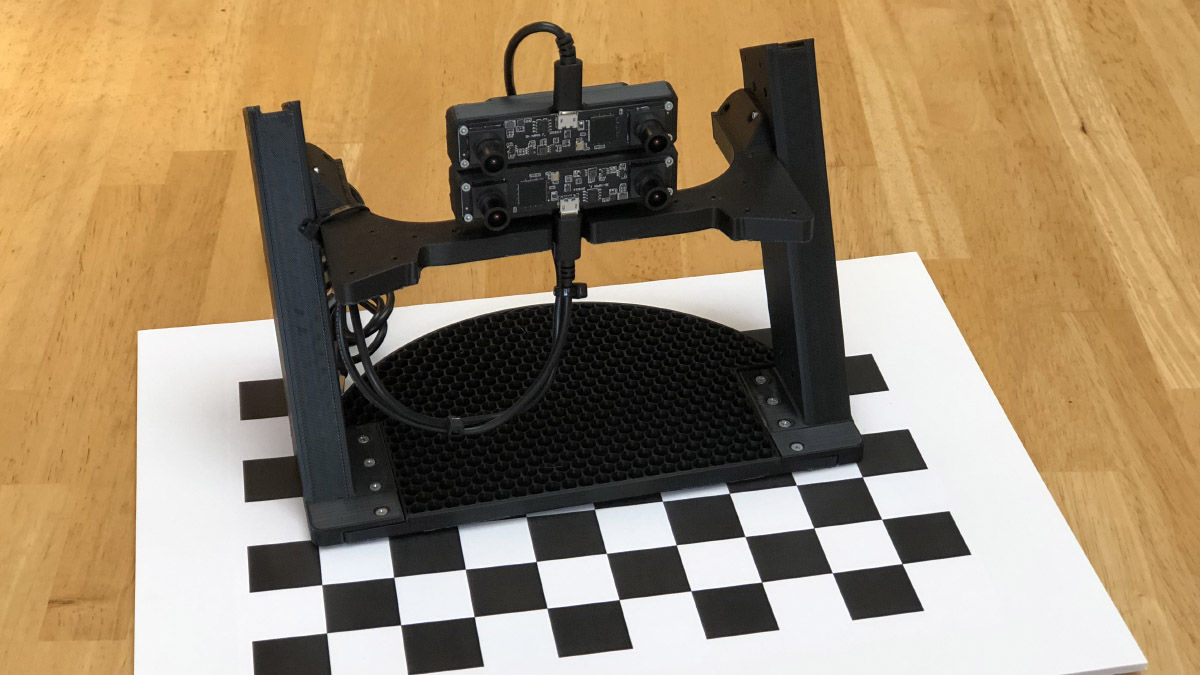

Introducing the Calibration Rig

As we discussed in our post on the North Star calibration system, small variations in the headset’s optical components affect the alignment of the left- and right-eye images. We have to compensate for this in software to produce a convergent image that minimizes eye strain.

Before we designed the calibration stand, the screen positions and orientations of each headset had to be compensated for manually in software. With the North Star calibrator, we’ve automated this step using two visible-light stereo cameras. The optimization algorithm finds the best distortion parameters automatically by comparing images inside the headset with a known reference. This means that auto-calibration can find the best possible image quality within a few minutes. Check out our GitHub project for instructions on the calibration process.
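To give a feel for what "finding the best distortion parameters by comparing against a known reference" means, here is a minimal sketch in Python. It is not the North Star calibrator: the toy distortion model (one radial term plus a shift), the function names `distort`, `error`, and `calibrate`, and the simple pattern search are all assumptions made for illustration, and it compares point positions rather than camera images.

```python
def distort(point, params):
    """Toy distortion model (an assumption): radial term k plus a shift (dx, dy)."""
    k, dx, dy = params
    x, y = point
    scale = 1.0 + k * (x * x + y * y)
    return (x * scale + dx, y * scale + dy)


def error(params, reference, observed):
    """Sum of squared distances between predicted and observed points."""
    total = 0.0
    for ref, obs in zip(reference, observed):
        px, py = distort(ref, params)
        total += (px - obs[0]) ** 2 + (py - obs[1]) ** 2
    return total


def calibrate(reference, observed, step=0.1, tol=1e-9):
    """Pattern search: nudge one parameter at a time, halve the step when stuck."""
    params = [0.0, 0.0, 0.0]
    best = error(params, reference, observed)
    while step > tol:
        improved = False
        for i in range(len(params)):
            for delta in (step, -step):
                trial = list(params)
                trial[i] += delta
                e = error(trial, reference, observed)
                if e < best:
                    params, best, improved = trial, e, True
        if not improved:
            step /= 2.0
    return params, best


# Known reference: a 5x5 grid of points spanning [-1, 1] in both axes.
reference = [(x / 2, y / 2) for x in range(-2, 3) for y in range(-2, 3)]

# Stand-in for what the cameras would see through one particular headset.
hidden_params = (0.05, 0.02, -0.03)
observed = [distort(p, hidden_params) for p in reference]

fitted, residual = calibrate(reference, observed)
print("fitted parameters:", [round(v, 4) for v in fitted])
```

The search only sees the reference grid and the observed points, yet it recovers the hidden parameters by driving the mismatch toward zero. A real calibration would use a far richer optical model and a more capable optimizer, but the loop has the same shape: predict, compare with the reference, adjust.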

Mechanical Updates

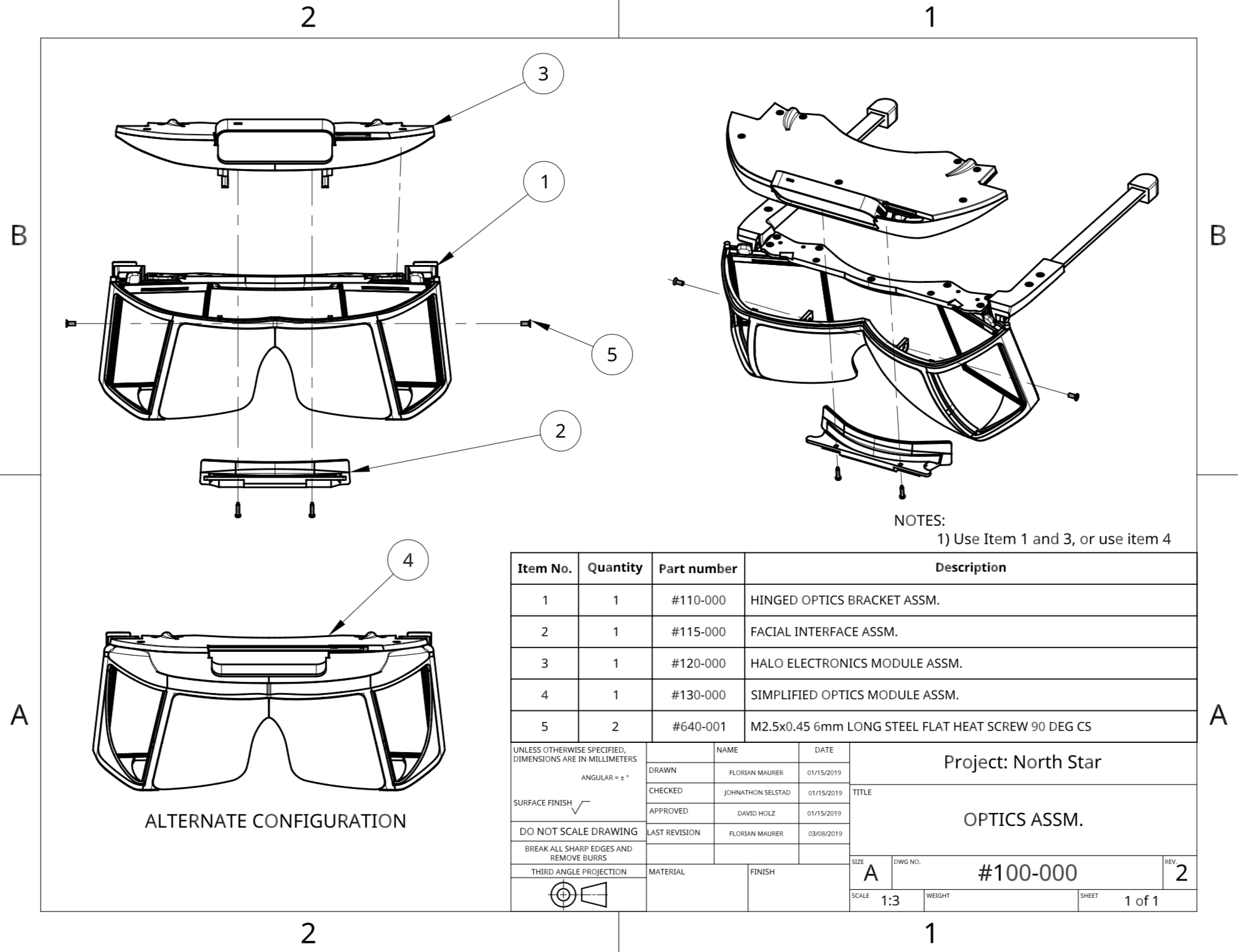

Building on feedback from the developer community, we’ve made the assembly easier and faster to put together. Mechanical Update 3.1 introduces a simplified optics assembly, designated #130-000, that cuts print time in half (as well as being much sturdier).

The biggest cut in print time comes from the fact that we no longer need support material on the lateral overhangs. In addition, two parts were combined into one. This compounding effect saves an entire workday’s worth of print time!

Left: 1 part, 95g, 7-hour print, no supports. Right: 2 parts, 87g, 15-hour print, supports needed.
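As a quick sanity check on the numbers in the caption, the savings can be worked out directly. This small Python snippet uses only the figures quoted above; the variable names are ours.

```python
old_hours, new_hours = 15, 7   # print times from the caption
old_grams, new_grams = 87, 95  # part masses from the caption

saved_hours = old_hours - new_hours
reduction = saved_hours / old_hours
extra_grams = new_grams - old_grams

print(f"Saved {saved_hours} hours ({reduction:.0%} less print time)")
print(f"Extra material: {extra_grams}g")
```

So the new part prints in a little under half the time, at the cost of a few extra grams of material.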

The new assembly, #130-000, is backwards compatible with Release 3. It replaces #110-000 and #120-000, the optics assembly and electronics module respectively. Check out the assembly drawings in the GitHub repo for the four parts you need!

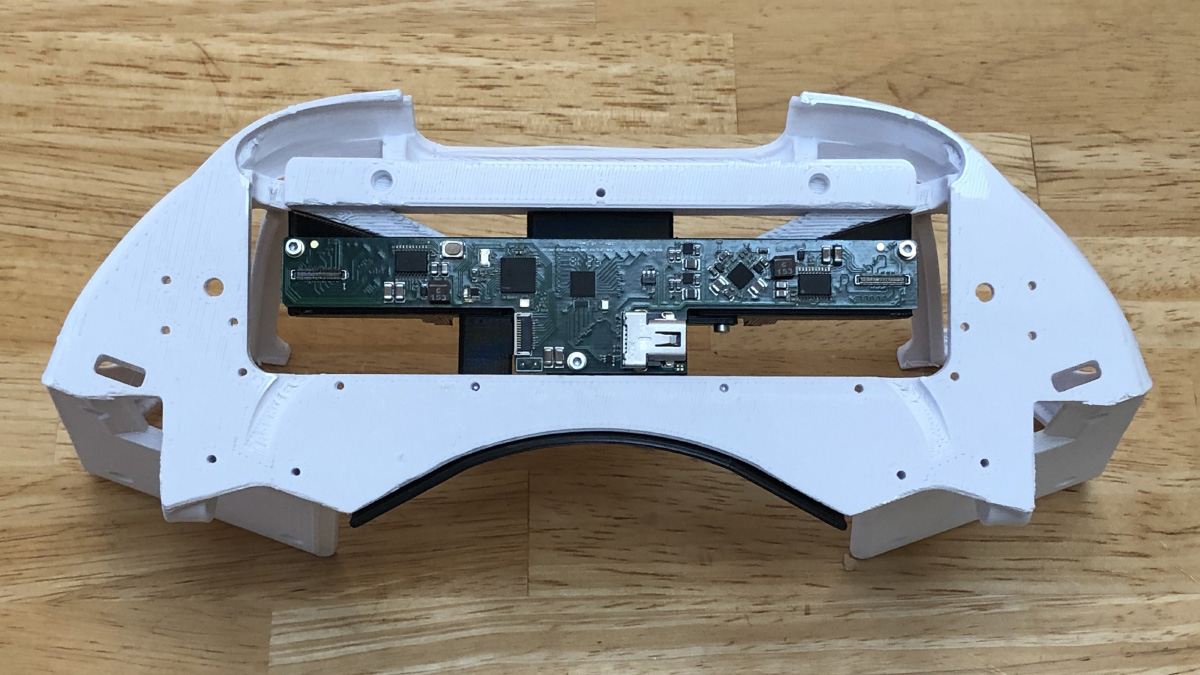

Cutout for Power Pins

Last but not least, we’ve made a small cutout for the power pins on the driver board mount. When we received our NOA Labs driver board, we quickly noticed the interference and made the change to all the assemblies.

This change leaves room for the power connection whether you’re using pins or soldered wires, on either the top or the bottom.

Want to stay in the loop on the latest North Star updates? Join the discussion on Discord!

Over the past few months we’ve hit several major milestones in the development of Project North Star. At the same time, hardware hackers have built their own versions of the AR headset, with new prototypes appearing in Tokyo and New York. But the most surprising developments come from North Carolina, where a 19-year-old AR enthusiast has built multiple North Star headsets and several new demos.

Bringing new worlds to life doesn’t end with bleeding-edge software – it’s also a battle with the laws of physics. Project North Star is a compelling glimpse into the future of AR interaction and an exciting engineering challenge, with wide-FOV displays and optics that demanded a whole new calibration and distortion system.

Today we’re excited to share the latest major design update for the Leap Motion North Star headset. North Star Release 3 consolidates several months of research and insight into a new set of 3D files and drawings. Our goal with this release is to make Project North Star more inviting, less hacked together, and more reliable. The design includes more adjustments and mechanisms for a greater variety of head and facial geometries – lighter, more balanced, stiffer, and more inclusive.

Earlier this week, we shared an experimental build of our LeapUVC API, which gives you a new level of access to the Leap Motion Controller cameras. Today we’re excited to share a second experimental build – multiple device support.

Earlier this summer, we open sourced the design for Project North Star, the world’s most advanced augmented reality R&D platform. Like the first chocolate waterfall outside of Willy Wonka’s factory, now the first North Star-style headsets outside our lab have been born – in Japan.

Virtual reality. Augmented reality. Mixed, hyper, modulated, mediated, diminished reality. All of these flavours are really just entry points into a vast world of possibilities where we can navigate between our physical world and limitless virtual spaces. These technologies contain immense raw potential, and have advanced to the stage where they are pretty good, and pretty accessible. But in terms of what they enable, we’ve only seen a sliver of what’s possible.

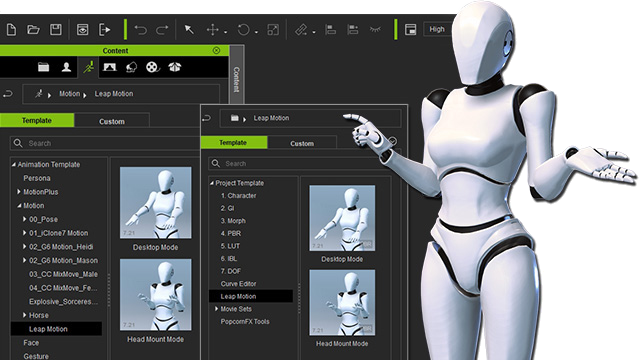

Animate from fingers to forearms with @LeapMotion and Reallusion iClone 7 for professional motion capture animation.

This week we’re excited to share a new engine integration with the professional animation community – Leap Motion and iClone 7 Motion LIVE. A full body motion capture platform designed for performance animation, Motion LIVE aggregates motion data streams from industry-leading mocap devices, and drives 3D characters’ faces, hands, and bodies simultaneously. Its easy workflow opens up extraordinary possibilities for virtual production, performance capture, live television, and web broadcasting. This professional-grade package is available now at a special price for a limited time.